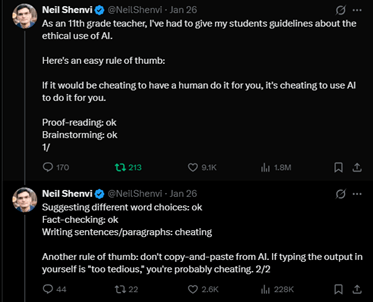

A few months ago, one of my Tweets went viral, eventually garnering 1.8 million views.

I had written the following:

As an 11th grade teacher, I’ve had to give my students guidelines about the ethical use of AI. Here’s an easy rule of thumb: If it would be cheating to have a human do it for you, it’s cheating to use AI to do it for you.

Proof-reading: ok

Brainstorming: ok

Suggesting different word choices: ok

Fact-checking: ok

Writing sentences/paragraphs: cheating

Another rule of thumb: don’t copy-and-paste from AI. If typing the output in yourself is “too tedious,” you’re probably cheating.

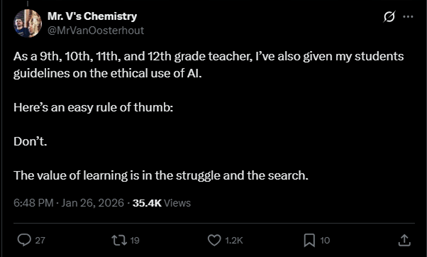

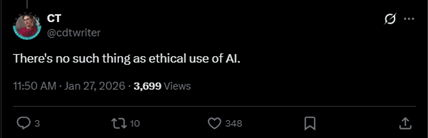

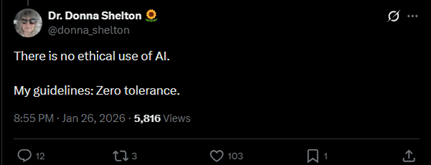

Responses were dramatically polarized. Many fellow teachers commented approvingly, saying that they had implemented similar policies in their own classrooms. But others made statements like these:

As a 9th, 10th, 11th, and 12th grade teacher, I’ve also given my students guidelines on the ethical use of AI. Here’s an easy rule of thumb: Don’t. The value of learning is in the struggle and the search.

There is no ethical use of AI. My guidelines: Zero tolerance.

There’s no such thing as ethical use of AI.

Perhaps even more interesting was the fact that these reactions didn’t fall along predictable partisan lines. Pro-AI and anti-AI reactions came from conservatives, progressives, Trump lovers, Trump haters, atheists, and evangelicals, with no discernible pattern.

So what should educators think of AI, particularly Christian homeschool educators?

What is AI?

AI is nothing more than a category of software. Ontologically (i.e., in its nature), it is no different from any other program your computer runs, whether Microsoft Word, Google Chrome, or Minesweeper. Although AI is a very broad category that encompasses everything from image analysis to workflow processing, I’ll focus specifically on LLMs (large language models), which are central to discussions of AI in education.

Technologically, LLMs are very (very, very) advanced versions of the text prediction software used by your iPhone’s text messaging or by Google’s search bar. Text prediction allows computers to correctly extrapolate from the letters “h-o-s” to “hospital” or “hostel” or “hostess.” In the same way that autocomplete programs are trained to recognize and predict patterns in words, LLMs are trained to recognize and predict patterns in sentences and paragraphs.
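For readers curious what “text prediction” looks like in practice, here is a toy sketch in Python. It is only an illustration of the autocomplete analogy, not how ChatGPT or any real keyboard is built: the word list, the usage counts, and the function names (`complete_prefix`, `train_bigrams`, `predict_next`) are all made up for this example. The first function finishes a partial word, the way a phone turns “h-o-s” into “hospital”; the second and third learn which word tends to follow which in a sample sentence and then guess the next word.

```python
from __future__ import annotations

from collections import Counter, defaultdict
from typing import Optional

# Hypothetical word list with made-up usage counts, standing in for the
# data a phone keyboard might learn from its owner's typing.
WORD_COUNTS = {"hospital": 50, "hostel": 12, "hostess": 8, "house": 90, "help": 70}


def complete_prefix(prefix: str, k: int = 3) -> list[str]:
    """Return up to k known words that start with prefix, most frequent first."""
    matches = [w for w in WORD_COUNTS if w.startswith(prefix)]
    return sorted(matches, key=lambda w: -WORD_COUNTS[w])[:k]


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count how often each word is followed by each other word in the text."""
    words = text.lower().split()
    following: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model: dict[str, Counter], word: str) -> Optional[str]:
    """Guess the word most often seen after `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


if __name__ == "__main__":
    print(complete_prefix("hos"))  # ['hospital', 'hostel', 'hostess']
    model = train_bigrams("the cat sat on the mat and the cat slept")
    print(predict_next(model, "the"))  # cat
```

An LLM plays a vastly more sophisticated version of this same guessing game, weighing long stretches of preceding text rather than a single word, but the underlying task of predicting what comes next is the same.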

Given this description, the notion that AI is, or ever will be, conscious or sentient is false. AI is an inanimate object, like any other machine or piece of software. It does not truly know things or have self-awareness any more than your dictionary or cell phone does.

Necessary Cautions

Though AIs are not ontologically different from other programs, they are significantly different in other ways.

Most obviously, LLMs will make cheating far easier. Today, LLMs routinely outperform humans on standardized tests and can write essays that, like it or not, are better than those of most college students. The sudden widespread availability of internet pornography warped the consciences of a generation of young adults; the widespread availability of a method for undetectable cheating will likely do the same.

Apart from the immorality of cheating, LLM misuse will take a tremendous toll on the intellectual development of students. Like CliffsNotes or SparkNotes, LLMs can effortlessly generate summaries of assigned readings. Complicated texts can be “dumbed down.” Response questions can be auto-generated. Used as nothing more than a “homework machine,” ChatGPT will destroy our students’ ability to read, write, and think clearly. Even worse, digital boyfriends and girlfriends (to say nothing of sex bots) are likely to become more common and more socially acceptable. Believing that a machine loves you is tragic and dangerous. Our children need to be strongly warned of the havoc this delusion will wreak in people’s lives.

Finally, education is about more than just the transmission of information; it also involves the formation of the student’s character. Human teachers can exemplify virtue. Robots cannot.

I take all these cautions very seriously. Used carelessly, LLMs will severely harm our children’s education. Yet the same can be said of innumerable technological advances that preceded AI, from the printing press to the personal computer to the internet. Misuse of a technology, even grossly immoral misuse, does not make that technology inherently sinful.

The Permissibility of AI

So what case can be made that LLMs are morally permissible?

Let’s begin by noting that technology itself is treated as morally neutral in the Bible. Advances like the introduction of musical instruments (Gen. 4:21), metal-working (Gen. 4:22), shipbuilding (Gen. 6:14-16), writing (Ex. 17:14), brick-making (Gen. 11:3-4), chariot-building (Ex. 14:7), spinning yarn (Ex. 35:25-26), and jewelry-making (Gen. 24:22) are taken for granted. These are never presented as inherently unclean, even when they are first mentioned in connection with evil (see Gen. 4:17, Gen. 4:21, Gen. 4:22, Gen. 11:3-4, Ex. 14:7). Regardless of their origins, they are used in worship (Ex. 31:4-5, 1 Kings 7:13-14) and their absence is presented as a deficit (1 Sam. 13:19). Consequently, it’s difficult to argue that the Bible is explicitly or even implicitly anti-technology.

The continuity between LLMs and other forms of technology thus serves as an argument that LLMs are also not inherently evil. Ironically, the strongest proponents and the strongest opponents of AI both tend to treat it as a kind of sentient superbeing. It isn’t. As I argued earlier, precursors to LLMs have existed for decades, from text-based computer games like Zork and Seastalker in the 1980s to Microsoft’s Clippy in the 1990s to Amazon’s “You Might Also Like…” product suggestions in the 2000s. All these innovations offered users the illusion of human-to-human interaction; the only difference is that LLMs are better at it.

This continuity also explains why many philosophical arguments against LLMs prove too much. Consider the claims that AI will necessarily weaken our cognitive abilities, that it will replace human-to-human interaction, that it will erase beauty, or that it is an attempt to achieve idolatrous autonomy from God. These arguments can be, and have been, leveled against many other forms of technology.

For example, in Phaedrus, Socrates famously lamented that the invention of writing would weaken our memory and replace an ensouled, speaking human teacher with a dead, silent letter. The smallpox vaccine was decried as an attempt to evade God’s sovereignty and to unnaturally extend our earthly lives. Sterile printed texts replaced beautiful illuminated manuscripts. If we press such arguments against AI, we must consistently apply them to all technology.

These arguments also demonstrate a third point: technology has always involved trade-offs. Socrates was, in fact, correct that the widespread use of writing would weaken our memory. But the benefits of recording and preserving the riches of human knowledge for millennia (including Socrates’ dialogues!) far outweighed the real loss of our ability to recite Homeric epics. Likewise, advances in, say, industrial agriculture have produced real downsides: the rise of junk food, the disappearance of family farms, the alienation of humans from nature. Yet few people would want to return to a time when the vast majority of children were malnourished or when a famine or drought could wipe out an entire civilization.

Similar conclusions have been reached by numerous Christian leaders across various Christian traditions. The Southern Baptist Convention’s Ethics and Religious Liberty Commission, Pastor John Piper, the Archbishop of Canterbury, and the Vatican have all made statements affirming that AI can be used morally to promote human flourishing. While they have all offered cautions about the unique role of humanity and the importance of ethical boundaries, none of them have judged AI to be inherently wicked or ethically impermissible.

Using LLMs Effectively

I suspect that many homeschoolers are hesitant to use AI because they only conceive of it as a “homework machine,” so here’s an example of how I used LLMs as a tool in my own education.

Last year, I planned to learn Latin using the Henle textbooks from the Classical Conversations curriculum. I wanted to practice on my iPhone while I was at my children’s cross country practices, but electronic resources were minimal. So I told ChatGPT to write a flashcard program for me. I had one working in a few days, and I used it all summer.

As the school year began, I commenced weekly translations of Caesar and Cicero. I’d translate each passage by hand, a process that took me several hours. At the end, I’d have a very rough translation. But was it accurate? Unfortunately, the Henle answer key proved extraordinarily unhelpful. Its English renderings were highly idiomatic and non-literal, with no explanation whatsoever.

So I again turned to ChatGPT. It provided me with a word-for-word literal translation along with explanations of parts of speech, declensions, conjugations, and relevant grammar rules. The computer often produced several pages of English explanation for every sentence of Latin.

Needless to say, my use of ChatGPT in this way did not impair my ability to learn.

How else can ChatGPT function as a tool rather than as a crutch? Try using it to:

- Check spelling and grammar. ChatGPT can identify contextual errors that traditional tools miss.

- Find synonyms. ChatGPT can explain the connotations of each word it suggests, teaching students shades of meaning that a physical thesaurus omits.

- Perform internet searches. AI is often much more effective than traditional search engines in finding material from imprecise prompts.

- Fact-check your writing. Many people worry about ChatGPT’s factual inaccuracies, but I have found it generally reliable when it is asked a specific question about general knowledge, like historical dates or mathematical equations. Moreover, you never have to take ChatGPT’s answers at face value. If it flags a purported error, by all means, get a second opinion. Treat it like you’d treat a human research assistant!

- Explain confusing answer keys or work step-by-step through problems.

- Brainstorm and troubleshoot ideas for, say, a science fair or art project.

- Generate worksheets, problem sets, quizzes, and tests.

If you doubt the utility of LLMs in these areas, I suggest simply logging into ChatGPT and trying it out.

Using LLMs Responsibly

Like all tools, LLMs can be misused. Your student can use the internet to find primary sources or to cut and paste from Wikipedia. He can use a thesaurus to learn the meaning of new words or to select synonyms at random. He can use answer keys to understand his mistakes or to cheat. Therefore, your student’s access to LLMs, like his access to the internet or to answer keys, should be limited by his stage of development and maturity. We don’t hand a calculator to a kindergartner as a “tool to help him learn addition.” However, we also don’t forbid a calculator to a high-schooler on the grounds that “we want him to learn addition.”

Which brings me back to my original guideline with a slight modification: If it would be unethical or developmentally inappropriate to have a human do it for you, it’s unethical or developmentally inappropriate to use AI to do it for you.

Of course, there are always trade-offs. No one is morally obligated to use LLMs in their students’ education, and the rejection of LLMs will probably produce some actual benefits. A student who spends months learning JavaScript to write a Latin flashcard program will learn both JavaScript and perseverance. But he will probably learn less Latin.

In the end, wisdom is required not just from students but from their teachers. We must discern when LLMs are appropriate and beneficial, and when they would instead hinder our children’s education. There is no “one size fits all” answer, which is something homeschooling parents have long understood.

For a long, moderated discussion of AI and education with Susannah Black Roberts, Annie Crawford, and Brian Dellinger, see this video.
